People are worried that AI will take everyone’s jobs. We’ve been here before.

In a 1938 article, MIT’s president argued that technical progress didn’t mean fewer jobs. He’s still right.

MIT Technology Review is celebrating our 125th anniversary with an online series that draws lessons for the future from our past coverage of technology.

It was 1938, and the pain of the Great Depression was still very real. Unemployment in the US was around 20%. Everyone was worried about jobs.

In 1930, the prominent British economist John Maynard Keynes had warned that we were “being afflicted with a new disease” called technological unemployment. Labor-saving advances, he wrote, were “outrunning the pace at which we can find new uses for labour.” There seemed to be examples everywhere. New machinery was transforming factories and farms. Mechanical switching being adopted by the nation’s telephone network was wiping out the need for local phone operators, one of the most common jobs for young American women in the early 20th century.

Were the impressive technological achievements that were making life easier for many also destroying jobs and wreaking havoc on the economy? To make sense of it all, Karl T. Compton, the president of MIT from 1930 to 1948 and one of the leading scientists of the day, wrote in the December 1938 issue of this publication about the “Bogey of Technological Unemployment.”

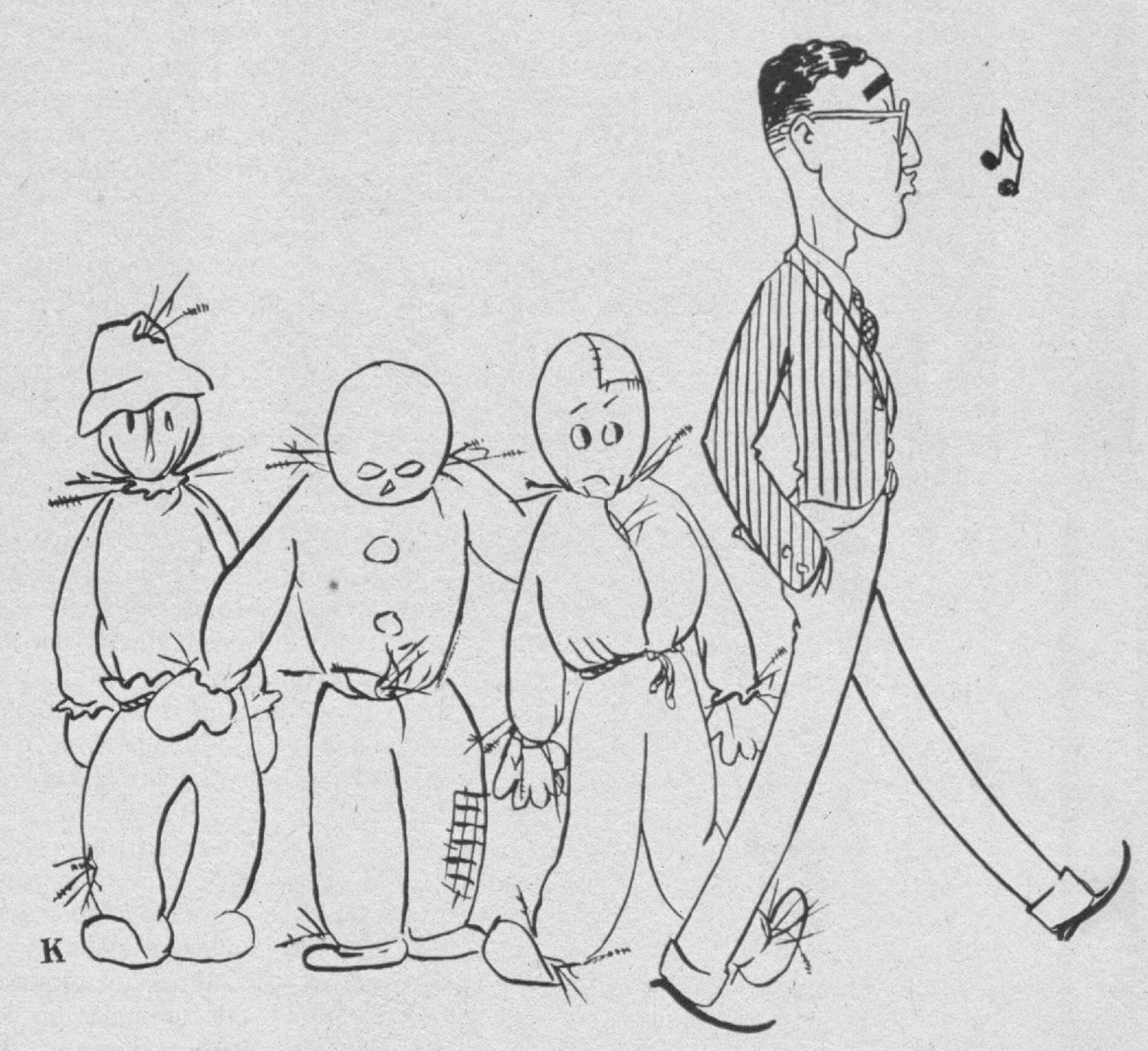

How, began Compton, should we think about the debate over technological unemployment—“the loss of work due to obsolescence of an industry or use of machines to replace workmen or increase their per capita production”? He then posed this question: “Are machines the genii which spring from Aladdin’s Lamp of Science to supply every need and desire of man, or are they Frankenstein monsters which will destroy man who created them?” Compton signaled that he’d take a more grounded view: “I shall only try to summarize the situation as I see it.”

His essay concisely framed the debate over jobs and technical progress in a way that remains relevant, especially given today’s fears over the impact of artificial intelligence. Impressive recent breakthroughs in generative AI, smart robots, and driverless cars are again leading many to worry that advanced technologies will replace human workers and decrease the overall demand for labor. Some leading Silicon Valley techno-optimists even postulate that we’re headed toward a jobless future where everything can be done by AI.

While today’s technologies certainly look very different from those of the 1930s, Compton’s article is a worthwhile reminder that worries over the future of jobs are not new and are best addressed by applying an understanding of economics, rather than conjuring up genies and monsters.

Uneven impacts

Compton drew a sharp distinction between the consequences of technological progress on “industry as a whole” and the effects, often painful, on individuals.

For “industry as a whole,” he concluded, “technological unemployment is a myth.” That’s because, he argued, technology “has created so many new industries” and has expanded the market for many items by “lowering the cost of production to make a price within reach of large masses of purchasers.” In short, technological advances had created more jobs overall. The argument, and the question of whether it is still true, remains pertinent in the age of AI.

Then Compton abruptly switched perspectives, acknowledging that for some workers and communities, “technological unemployment may be a very serious social problem, as in a town whose mill has had to shut down, or in a craft which has been superseded by a new art.”

This analysis reconciled the reality all around—millions without jobs—with the promise of progress and the benefits of innovation. Compton, a physicist, was the first chair of a scientific advisory board formed by Franklin D. Roosevelt, and he began his 1938 essay with a quote from the board’s 1935 report to the president: “That our national health, prosperity and pleasure largely depend upon science for their maintenance and their future improvement, no informed person would deny.”

Compton’s assertion that technical progress had produced a net gain in employment wasn’t without controversy. According to a New York Times article written in 1940 by Louis Stark, a leading labor journalist, Compton “clashed” with Roosevelt after the president told Congress, “We have not yet found a way to employ the surplus of our labor which the efficiency of our industrial processes has created.”

As Stark explained, the issue was whether “technological progress, by increasing the efficiency of our industrial processes, take[s] jobs away faster than it creates them.” Stark reported recently gathered data on the strong productivity gains from new machines and production processes in various sectors, including the cigar, rubber, and textile industries. In theory, as Compton argued, that meant more goods at a lower price, and—again in theory—more demand for these cheaper products, leading to more jobs. But as Stark explained, the worry was: How quickly would the increased productivity lead to those lower prices and greater demand?

As Stark put it, even those who agreed that jobs will come back in “the long run” were concerned that “displaced wage-earners must eat and care for their families ‘in the short run.’”

World War II soon meant there was no shortage of employment opportunities. But the job worries continued. In fact, while it has waxed and waned over the decades depending on the health of the economy, anxiety over technological unemployment has never gone away.

Automation and AI

Lessons for our current AI era can be drawn not just from the 1930s but also from the early 1960s. Unemployment was high. Some leading thinkers of the time claimed that automation and rapid productivity growth would outpace the demand for labor. In 1962, MIT Technology Review sought to debunk the panic with an essay by Robert Solow, an MIT economist who received the 1987 Nobel Prize for explaining the role of technology in economic growth and who died late last year at the age of 99.

In his piece, titled “Problems That Don’t Worry Me,” Solow scoffed at the idea that automation was leading to mass unemployment. Productivity growth between 1947 and 1960, he noted, had been around 3% a year. “That’s nothing to be sneezed at, but neither does it amount to a revolution,” he wrote. No great productivity boom meant there was no evidence of a second Industrial Revolution that “threatens catastrophic unemployment.” But, like Compton, Solow also acknowledged a different type of problem with the rapid technological changes: “certain specific kinds of labor … may become obsolete and command a suddenly lower price in the market … and the human cost can be very great.”

These days, the panic is over artificial intelligence and other advanced digital technologies. Like the 1930s and the early 1960s, the early 2010s were a time of high unemployment, in this case because the economy was struggling to recover from the 2007–’09 financial crisis. It was also a time of impressive new technologies. Smartphones were suddenly everywhere. Social media was taking off. There were glimpses of driverless cars and breakthroughs in AI. Could those advances be related to the lackluster demand for labor? Could they portend a jobless future?

Again, the debate played out in the pages of MIT Technology Review. In a story I wrote titled “How Technology Is Destroying Jobs,” economist Erik Brynjolfsson and his colleague Andrew McAfee argued that technological change was eliminating jobs faster than it was creating them. This wasn’t just about a mill shutting down. Rather, advanced digital technologies were leading to job losses across a broad swath of the economy, raising the specter once again of technological unemployment.

It’s difficult to pinpoint a single cause for something as complex as a dip in total employment—it could be just a result of sluggish economic growth. But it was becoming increasingly obvious, both in the data and in everyday observations, that new technologies were changing the types of jobs in demand—and while that was nothing new, the scope of the transition was troubling, and so was the speed at which it was happening. Industrial robots had killed off many well-paying manufacturing jobs in places like the Rust Belt, and now AI and other digital technologies were coming after clerical and office jobs—and even, it was feared, truck driving.

In his farewell speech before leaving office in January 2017, President Barack Obama spoke about “the relentless pace of automation that makes a lot of good middle-class jobs obsolete.” By that time, it was clear that Compton’s optimism needed to be rethought. Technical progress was not inevitably leading to job growth, and the pain was not limited to a few specific locations and industries.

Why Musk is wrong

In an interview late last year with the UK prime minister, Rishi Sunak, Elon Musk declared there will come a time when “no job is needed,” thanks to an AI “magic genie that can do everything you want.” Musk added that as a result, “we won’t have universal basic income, we’ll have universal high income”—apparently answering Compton’s rhetorical question about whether machines will be “the genii which … supply every need and desire of man.”

It might not be possible to prove Musk wrong, since he gave no timeline for his utopian prediction; in any case, how do you argue against the power of a magical genie? But the end-of-work meme is a distraction as we figure out the best way to use AI to expand the economy and create new jobs.

Breakthroughs in generative AI, such as ChatGPT and other large language models, will likely transform the economy and labor markets. But there’s no convincing evidence that we’re on a path to a jobless future. To paraphrase Solow, we should worry about that when there’s a problem to worry about.

Even a bullish estimate about the effects of generative AI by Goldman Sachs puts its impact on productivity growth at around 1.5% a year over the next 10 years. That, as Solow might say, is nothing to sneeze at, but it’s not going to end the need for workers. The Goldman Sachs report calculated that roughly two-thirds of US jobs are “exposed to some degree of automation by AI.” Yet this conclusion is often misinterpreted—it doesn’t mean all those jobs will be replaced. Rather, as the Goldman Sachs report notes, most of these positions are “only partially exposed to automation.” For many of these workers, AI will become part of the workday and won’t necessarily lead to layoffs.

One critical wild card is how many new jobs will be created by AI even as existing ones disappear. Estimating such job creation is notoriously difficult. But MIT’s David Autor and his collaborators recently calculated that 60% of employment in 2018 was in types of jobs that didn’t exist before 1940. One reason innovation has created so many new jobs is that it has increased the productivity of workers, augmenting their capabilities and expanding their potential to do new tasks. The bad news: this job creation is countered by the labor-destroying impact of automation when it’s used to simply replace workers. As Autor and his coauthors conclude, one of the key questions now is whether “automation is accelerating relative to augmentation, as many researchers and policymakers fear.”

In recent decades, companies have often used AI and advanced automation to slash jobs and cut costs. There’s no economic rule that innovation will in fact favor augmentation and job creation over this type of automation. But we have a choice going forward: we can use technology to simply replace workers, or we can use it to expand their skills and capabilities, leading to economic growth and new jobs.

One of the lasting strengths of Compton’s 1938 essay was his argument that companies needed to take responsibility for limiting the pain of any technological transition. His suggestions included “coöperation between industries of a community to synchronize layoffs in one company with new employment in another.” That might sound outdated in today’s global economy. But the underlying sentiment remains relevant: “The fundamental criterion for good management in this matter, as in every other, is that the predominant motive must not be quick profits but best ultimate service of the public.”

At a time when AI companies are gaining unprecedented power and wealth, they also need to take greater responsibility for how the technology is affecting workers. Conjuring up a magical genie to explain an inevitable jobless future doesn’t cut it. We can choose how AI will define the future of work.
